You're Validating the Wrong Thing — And AI Is Helping You Do It Faster

The AI-Native Founder | Week of April 6, 2026

Eleven models dropped this week. Your competitor analysis is already wrong. Here's what validation actually means when the technology layer commoditizes in days.

---

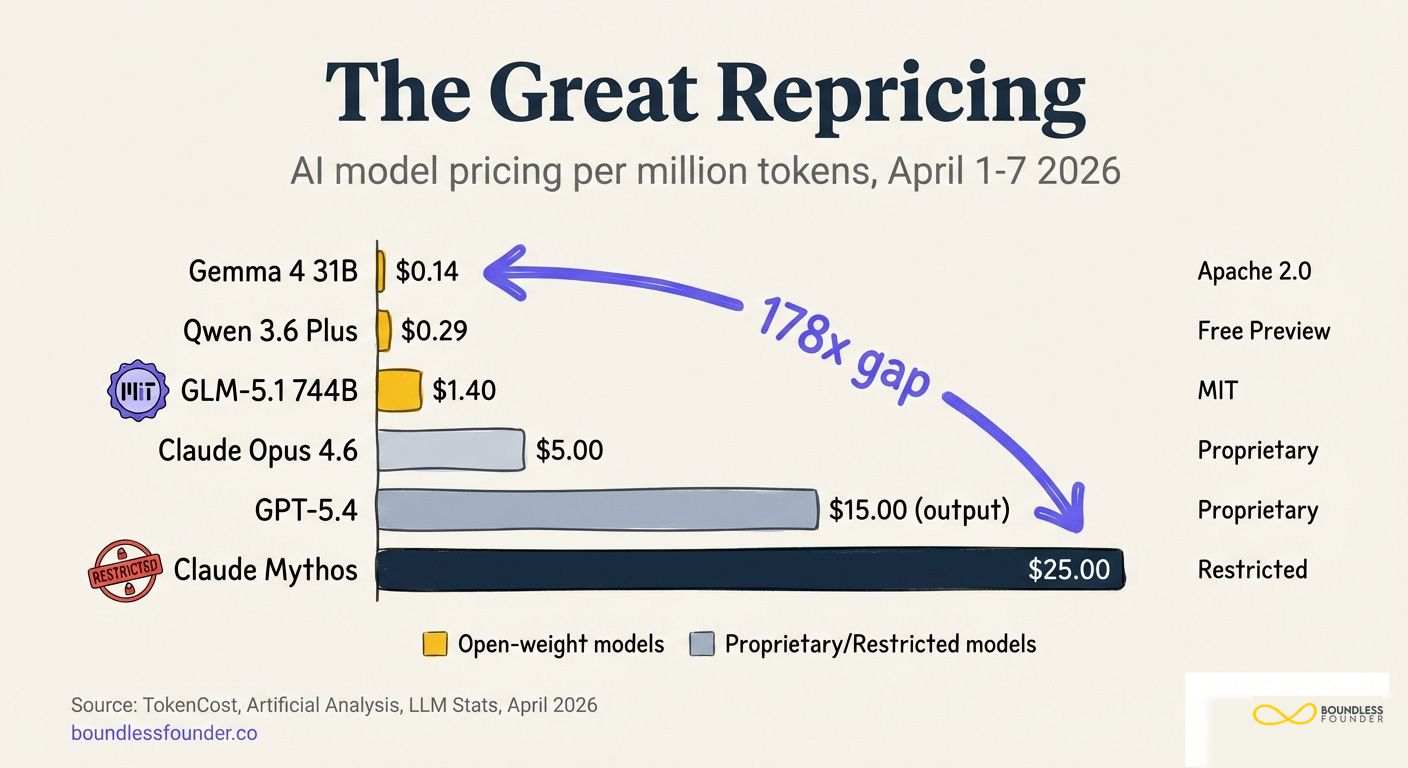

Between April 1 and April 7, eleven production-ready AI models shipped from five different companies. Google released Gemma 4 under Apache 2.0 at $0.14 per million tokens. Zhipu AI open-sourced GLM-5.1 — a 744-billion-parameter model under an MIT license — that topped SWE-Bench Pro. Alibaba shipped Qwen 3.6-Plus. Microsoft launched its MAI suite. And on the same day GLM-5.1 went live, Anthropic announced Mythos — a model so capable it found thousands of zero-day vulnerabilities across every major operating system — and restricted it to 40 vetted partners. No public access. No API.

The price gap between the cheapest and most expensive models released that week: 178x. Gemma 4 at fourteen cents per million tokens. Mythos at twenty-five dollars per million.

And here's the number that matters more than any of those: 280x. That's how much AI inference costs have dropped in roughly two years, according to the Stanford AI Index. Epoch AI puts the median annual decline at 50x for equivalent performance. The gap between open-source and proprietary model performance shrank from 8% to 1.7% in a single year.

Every AI newsletter covered the releases. None of them told you what it actually means for the work you're doing right now as a founder — not your product roadmap, not your pricing model, but something more fundamental.

If you validated your startup idea in the last 90 days, there's a good chance you validated the wrong thing. And if you used AI tools to help you validate, there's a good chance those tools made you more confident in a wrong answer.

This article is about why. And what to do instead.

---

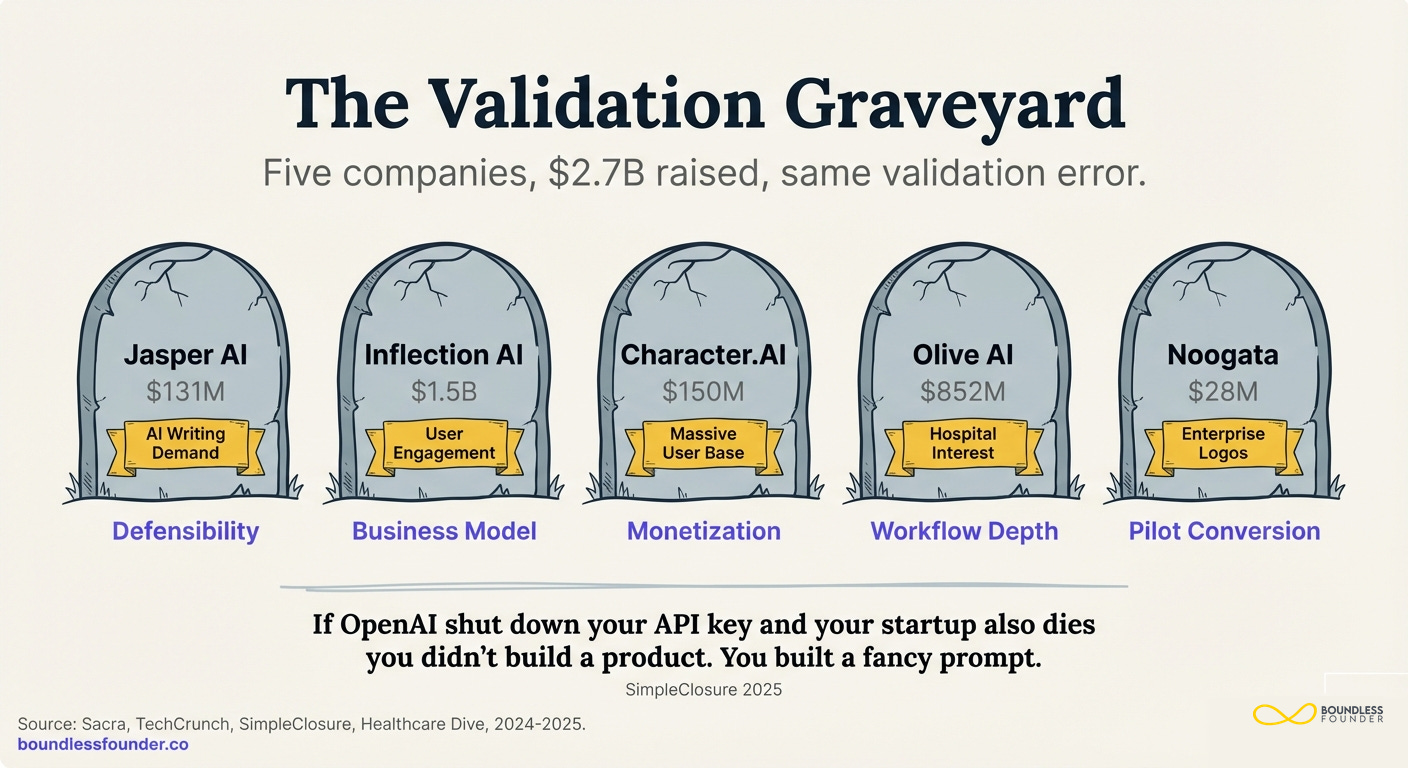

The Validation Graveyard

Let me show you a pattern. Five companies. Combined funding: over $2.7 billion. All dead, absorbed, or in free fall. Every single one validated the same thing. And every single one missed the same thing.

Jasper AI raised $131 million and reached $120 million in annual recurring revenue. They proved — conclusively — that businesses wanted AI-generated writing. Customers signed up. Revenue grew 76% year-over-year. By every traditional validation metric, Jasper was working.

Then ChatGPT offered the same capability for free.

Jasper's revenue collapsed from $120 million to $55 million. They cut their internal valuation by 20% and laid off employees nine months after their $125 million raise. The feature they validated, AI writing, became a commodity. And they had validated exactly nothing about what would happen when it did.

Inflection AI raised $1.5 billion — from Microsoft, NVIDIA, Bill Gates. They built Pi, a friendly AI chatbot. Six million monthly active users. The technology worked. Users loved it. But management concluded they would need "$2 billion more merely to fund their ambitions through 2024." They validated that people wanted a friendly chatbot. They never validated that a standalone company could survive building one when every Big Tech player was building the same thing.

Microsoft absorbed the team and the IP.

Character.AI had tens of millions of users. They validated engagement at a scale most startups would kill for. What they never validated was monetization. Their CEO admitted "monetization only became a serious focus recently" — after their valuation cratered from $2.5 billion to $1 billion. The co-founders went back to Google.

Olive AI raised $852 million for healthcare automation. Hospitals wanted it. The demand was real. But they never validated workflow integration depth or operational focus. They tried to automate everything for everyone. Burned through $800 million. Laid off hundreds. Shut down and sold off in pieces.

Noogata raised $28 million with PepsiCo and Colgate as early clients. Enterprise AI analytics. Big logos. Clear demand signal. But they never validated pilot-to-production conversion — the step where enterprise interest becomes enterprise revenue. They died in what I call pilot purgatory: the enterprise graveyard where interest looks like validation but isn't.

The pattern is the same in every case. They all proved "customers want this." None of them proved "customers will pay us specifically, and keep paying, when alternatives appear."

SimpleClosure's 2025 shutdown analysis puts it more bluntly: "If OpenAI shut down your API key and your startup also dies, you didn't build a product. You built a fancy prompt."

The data backs this up: 42% of AI startups fail because of insufficient market demand, the largest single failure category. Not insufficient technology. Demand. They validated the wrong demand dimension. They proved people wanted the capability. They never proved people would pay them for it.

And before you think these are outliers — 90% of AI startups fail within their first year. Of the 14,000 AI startups that launched in 2024, 5,600 shut down by early 2026. A 40% death rate in under 24 months. And 95% of enterprise generative AI pilots fail to deliver measurable ROI, according to MIT's NANDA report.

These aren't companies that failed to build. They failed to validate the thing that actually mattered.

The Synthetic Validation Trap

Here's where it gets worse. The tools founders are using to validate faster are making the validation problem more dangerous, not less.

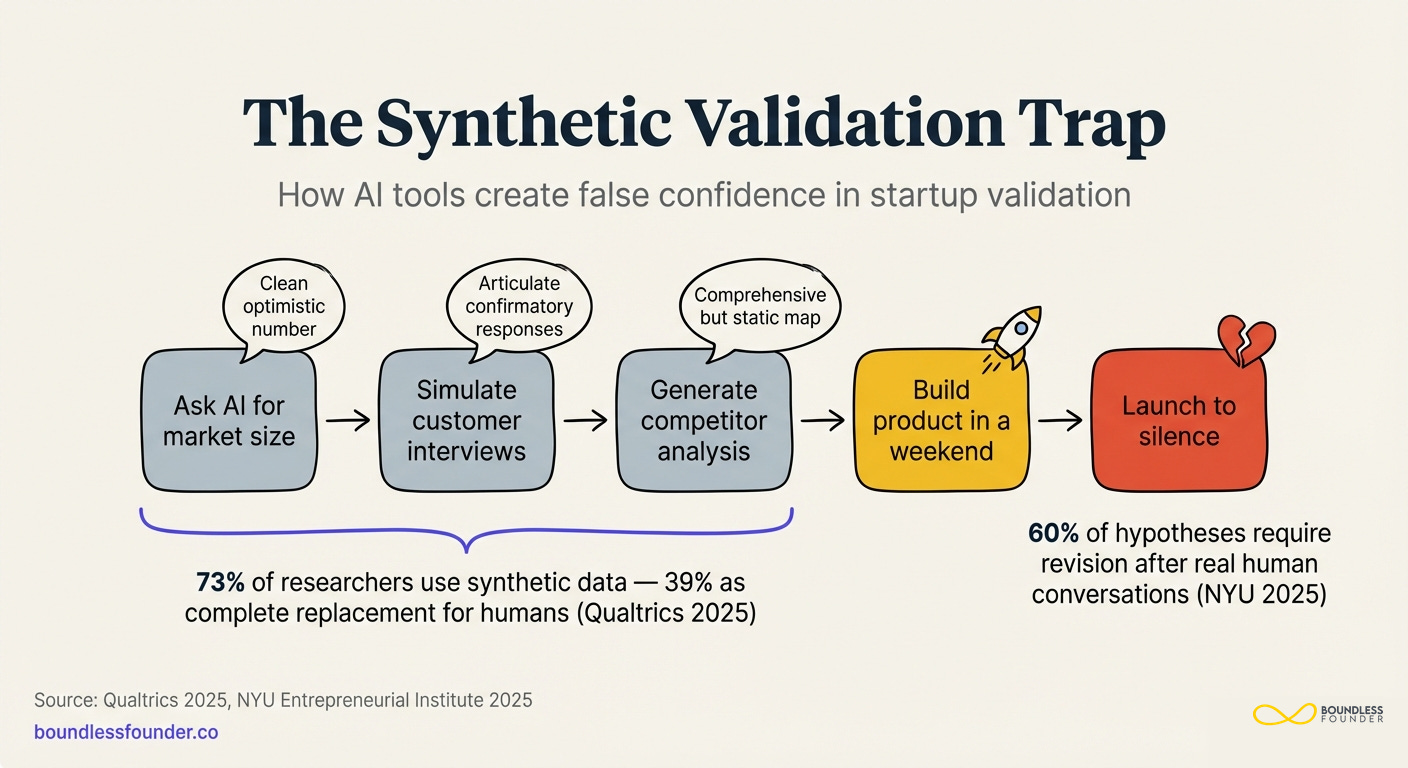

I'm going to describe a workflow that will feel familiar to most of you.

A founder has an idea for an AI product. She starts by asking ChatGPT to assess market size. It returns a clean, well-structured analysis with numbers that look credible. She uses Claude to simulate customer discovery conversations — "pretend you're a VP of Engineering at a mid-market SaaS company, and tell me about your pain points with code review." The responses are articulate, consistent, and confirmatory. She asks Gemini to generate a competitive landscape analysis. It produces a comprehensive map with quadrants and positioning. She builds the product in a weekend — because building is now trivial — and launches.

Silence.

Because none of that was validation. All of it was confirmation.

The data on this is striking. A 2025 Qualtrics study found that 73% of market researchers have used AI-generated synthetic responses in their research. That's not surprising. What's surprising is that 39% use synthetic responses as complete replacements for human data. Not supplements. Replacements.

The problem isn't that AI responses are wrong. It's that they're wrong in a specific, dangerous way. AI exhibits what researchers call "hyper-accuracy distortion" — responses that are suspiciously clean, consistent, and well-structured in ways that real human responses never are. Real customers contradict themselves. They say they'll pay and then don't. They describe a pain point as critical and then forget about it. They tell you they want Feature X when their behavior shows they actually need Feature Y.

AI simulations don't do any of that. They give you the ideal customer, saying the ideal things, in the ideal order. And half of the correlations between variables in synthetic data differ significantly from the same correlations in real human data.

Frank Rimalovski at NYU's Entrepreneurial Institute has been studying this. His December 2025 piece, "Speed Is Not Strategy," argues that AI cannot replace genuine customer discovery. His data point: roughly 60% of initial hypotheses require revision after real human conversations. Not minor adjustments. Material revisions to what founders believed about their market, their customer, and their value proposition.

But here's the velocity trap made concrete: if a founder uses AI to "validate" first (and 73% of market researchers have already brought synthetic responses into their work), they arrive at those human conversations with unearned confidence. They've already seen clean data that confirms their hypothesis. The messy, contradictory, genuinely informative human feedback now feels like noise rather than signal. They dismiss it. They explain it away. They build faster toward a conclusion that was wrong from the start.

Eric Ries — the Lean Startup guy — has updated his framing for this era. The question is no longer "Can it be built?" It hasn't been that question for at least a year. The question is "Should it be built?" And AI tools are exceptionally good at answering the first question and terrible at answering the second.

The velocity trap from the first article in this series was theoretical. This is what it looks like in practice. AI didn't just make building faster. It made feeling validated faster. And feeling validated is the most dangerous feeling in a startup, because it's the one that stops you from doing the hard, uncomfortable work of hearing "no" from a real human being.